CameraHRV: Robust measurement of heart rate variability using a camera

Amruta Pai, Ashok Veeraraghavan, and Ashutosh Sabharwal, CameraHRV: Robust measurement of heart rate variability using a camera. SPIE BIOS (2018)

New Dataset – relevant for distancePPG, PulseCam and CameraHRV – see information below

TEAM MEMBERS:

Amruta Pai, Graduate Student, ECE

Dr. Ashok Veeraraghavan, Associate Professor, ECE

Dr. Ashutosh Sabharwal, Professor, ECE

Overview: A robust method to extract HRV using a camera

The inter-beat interval (the duration of one cardiac cycle) changes slightly from one heartbeat to the next; this variation is measured as heart rate variability (HRV). HRV is presumed to arise from interactions between the parasympathetic and sympathetic nervous systems, so it is sometimes used as an indicator of the stress level of an individual. HRV also reveals some clinical information about cardiac health.
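To illustrate how this beat-to-beat variation can be summarized, here is a minimal Python sketch that computes two widely used time-domain HRV statistics from a list of inter-beat intervals: SDNN (the standard deviation of the intervals) and RMSSD (the root mean square of successive differences). The function name `hrv_metrics`, the choice of statistics, and the sample interval values are illustrative assumptions; they are not taken from the CameraHRV paper.

```python
import math
import statistics


def hrv_metrics(ibi_ms):
    """Return simple time-domain HRV statistics for inter-beat intervals (ms)."""
    if len(ibi_ms) < 2:
        raise ValueError("need at least two inter-beat intervals")
    # Differences between consecutive intervals capture beat-to-beat change.
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    mean_ibi = statistics.fmean(ibi_ms)
    return {
        "mean_ibi_ms": mean_ibi,
        "mean_hr_bpm": 60000.0 / mean_ibi,
        "sdnn_ms": statistics.stdev(ibi_ms),  # sample standard deviation
        "rmssd_ms": math.sqrt(statistics.fmean([d * d for d in diffs])),
    }


# Hypothetical intervals, in milliseconds, for a heart beating near 75 bpm.
ibi_ms = [800, 810, 790, 805, 795]
metrics = hrv_metrics(ibi_ms)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Both statistics are expressed in milliseconds, the same unit in which HRV estimation errors are usually reported.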

THE PROBLEM: Currently, HRV is accurately measured using contact devices such as a pulse oximeter. However, recent research in non-contact imaging photoplethysmography (iPPG) has made it possible to measure vital signs from just a video recording of any exposed skin (such as a person’s face). Current signal processing methods that extract HRV using peak detection perform well for contact-based systems but poorly on iPPG signals. The main reason is that these methods are sensitive to the large noise sources often present in iPPG data. Further, they are not robust to the motion artifacts that are common in iPPG systems.

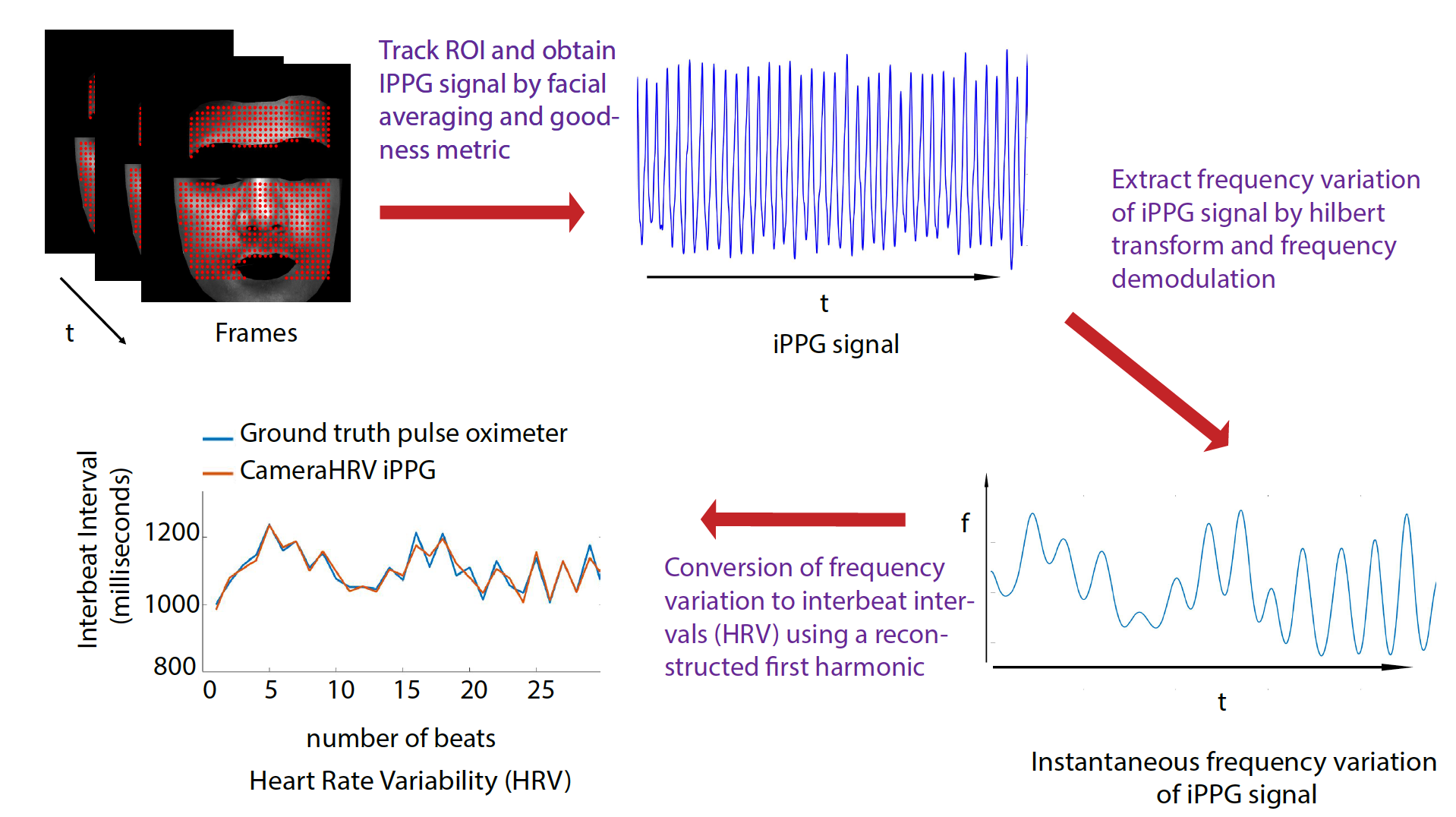

OUR SOLUTION: We developed a new algorithm, CameraHRV, for robustly extracting HRV even at the low SNR that is common in iPPG recordings. CameraHRV combines spatial combination and frequency demodulation to obtain HRV from the instantaneous frequency of the iPPG signal. CameraHRV outperforms other current methods of HRV estimation. Ground truth data for validation was obtained from an FDA-approved pulse oximeter. On iPPG data, CameraHRV showed an error of 6 milliseconds for scenarios with low motion and varying skin tone. The improvement in error was 14%. In high-motion scenarios such as reading, watching, and talking, the error was 10 milliseconds.
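The idea of reading HRV from the instantaneous frequency of a pulse signal can be sketched in Python as follows. This is not the CameraHRV implementation: it covers only a generic frequency-demodulation step (analytic signal, then phase difference), omits spatial combination entirely, and runs on a clean synthetic signal. The 30 Hz frame rate, the signal parameters, and all function names are assumptions made for illustration. A naive DFT keeps the example dependency-free; in practice a library routine such as `scipy.signal.hilbert` would normally be used.

```python
import cmath
import math


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform; adequate for short signals."""
    n = len(x)
    sign = 1 if inverse else -1
    # Precompute the n complex roots of unity used by every output bin.
    twiddle = [cmath.exp(sign * 2j * math.pi * j / n) for j in range(n)]
    out = []
    for k in range(n):
        acc = sum(x[m] * twiddle[(k * m) % n] for m in range(n))
        out.append(acc / n if inverse else acc)
    return out


def analytic_signal(x):
    """Build the analytic signal by discarding negative-frequency bins."""
    n = len(x)
    spectrum = dft(x)
    for k in range(n):
        if 0 < k < n / 2:
            spectrum[k] *= 2   # double the positive frequencies
        elif k > n / 2:
            spectrum[k] = 0j   # zero the negative frequencies
        # DC (k == 0) and, for even n, the Nyquist bin are left unchanged.
    return dft(spectrum, inverse=True)


def instantaneous_frequency(x, fs):
    """Frequency (Hz) between consecutive samples, from the phase increment."""
    z = analytic_signal(x)
    return [
        cmath.phase(z[i + 1] * z[i].conjugate()) * fs / (2 * math.pi)
        for i in range(len(z) - 1)
    ]


# Synthetic pulse signal: 1.2 Hz (72 bpm) carrier whose frequency swings
# by +/- 0.1 Hz once every 10 s. All values are assumed, not measured.
fs = 30.0                      # assumed camera frame rate in Hz
n = 300                        # 10 s of samples
f0, df, fm = 1.2, 0.1, 0.1     # mean rate, deviation, modulation rate (Hz)
t = [i / fs for i in range(n)]
signal = [
    math.cos(2 * math.pi * f0 * ti + (df / fm) * math.sin(2 * math.pi * fm * ti))
    for ti in t
]

f_inst = instantaneous_frequency(signal, fs)
ibi_ms = [1000.0 / f for f in f_inst]  # instantaneous inter-beat interval
print(f"IBI range: {min(ibi_ms):.0f} to {max(ibi_ms):.0f} ms")
```

Because the frequency estimate is continuous rather than tied to the location of individual peaks, a demodulation approach of this kind does not depend on finding each peak exactly. Real iPPG data would additionally need band-pass filtering and noise handling that this sketch leaves out.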

DATASET: This is not the dataset used in the paper, because participants in the original study did not consent to sharing their data. We have collected a new dataset with proper consent.

- 2 min video recording stationary with normal breathing

- 2 min video recording reading a webpage about funny facts

- 2 min video recording watching an informative video

- 2 min video recording of talking

- 2 min video recording of a deep breathing exercise (inhalation for 6 seconds and exhalation for 5 seconds)


Please download the dataset here.